Can AI and Metaverse Make Nuclear Energy Safer or More Dangerous?

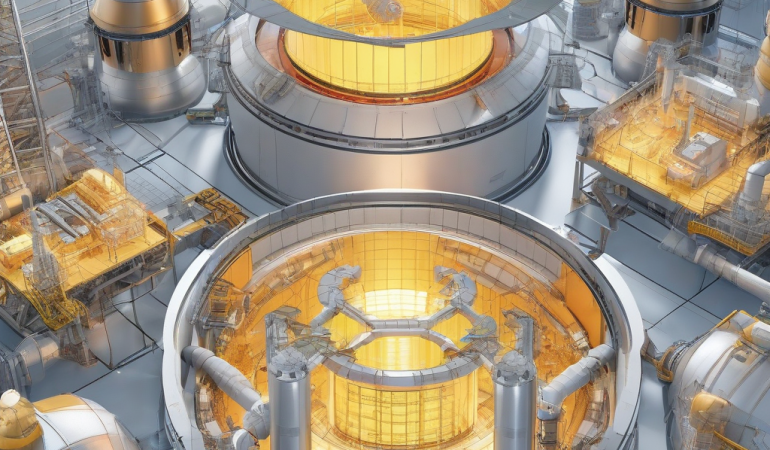

The nuclear industry is racing to integrate AI and Metaverse technologies into thorium reactor safety systems, promising unprecedented efficiency. However, this rush mirrors a broader pattern in which technological optimism overshadows critical risks.

A recent case study in Japan revealed that an AI system managing reactor cooling protocols failed to account for regional seismic data nuances, resulting in a near-miss incident. This failure raises a critical question: Are we prioritizing innovation over rigorous safety validation? The Metaverse’s role in nuclear operations introduces another layer of complexity.

Digital twins of reactors, created via Metaverse platforms, rely on real-time data feeds that could be manipulated or corrupted, creating single points of failure. This isn’t just a technical issue; it’s a systemic vulnerability. The stakes are existential: a biased AI or a compromised Metaverse model could trigger cascading failures in a system where margins for error are razor-thin.

Experts in nuclear safety warn that the stakes are too high to ignore these risks. To mitigate them, the nuclear industry must prioritize transparency, accountability, and rigorous safety validation.

The integration of AI into nuclear safety systems without adequate safeguards can lead to biased decision-making. Industry observers note that AI systems trained on biased data sets can perpetuate and amplify existing disparities. In nuclear safety, this means that AI systems may overlook or downplay critical safety risks, especially those affecting marginalized communities.

The Metaverse’s reliance on real-time data feeds and virtual environments creates a vast attack surface for cybercriminals. Industry experts warn that Metaverse platforms are particularly vulnerable to advanced persistent threats (APTs), which can compromise sensitive data and disrupt critical operations.

The nuclear industry must take immediate action to ensure the safe and responsible deployment of AI and Metaverse technologies. By prioritizing transparency, accountability, and rigorous safety validation, we can unlock the full potential of these technologies while protecting the public and the environment.

Bias in AI: When Safety Decisions Become an Algorithmic Gamble

The promise of AI in nuclear safety is tantalizing: processing vast datasets and predicting failures before they occur. But this capability comes with a caveat: it is only as good as the quality and representativeness of the data the system is trained on. Companies building these systems have faced scrutiny over potential biases, which can lead to flawed decision-making. If the training data primarily reflects reactor performance in temperate climates, the AI might downplay risks in regions prone to extreme weather or seismic activity. A similar gap surfaced at a Japanese facility, where the AI failed to adapt to a new design, causing a temporary loss of coolant. These biases aren’t accidental; they’re symptoms of systemic gaps in how AI is developed and deployed.
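One way to make the representativeness concern concrete is to check how much of the training data covers each operating condition before a model is trusted there. The sketch below is illustrative only: the condition labels, the sample counts, and the 5% threshold are assumptions made up for this example, not values from any real reactor dataset.

```python
from collections import Counter


def underrepresented_conditions(training_labels, required_conditions, min_share=0.05):
    """Return {condition: share} for conditions below min_share of the data.

    training_labels: one (hypothetical) operating-condition label per record.
    required_conditions: conditions the model is expected to handle.
    """
    counts = Counter(training_labels)
    total = len(training_labels)
    flagged = {}
    for condition in required_conditions:
        # A condition that never appears gets a share of 0.0.
        share = counts.get(condition, 0) / total if total else 0.0
        if share < min_share:
            flagged[condition] = share
    return flagged


# Hypothetical dataset dominated by temperate-climate records.
labels = ["temperate"] * 94 + ["seismic"] * 4 + ["extreme_heat"] * 2
gaps = underrepresented_conditions(
    labels, ["temperate", "seismic", "extreme_heat", "flood"]
)
print(sorted(gaps))
```

A check like this does not remove bias, but it turns "the data mostly reflects temperate climates" into something a reviewer can measure and sign off on.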

The opacity of many AI models is also a major issue. They operate as ‘black boxes’ even for their creators, which is a problem in nuclear safety, where transparency is crucial. Industry observers warn that this lack of accountability is particularly perilous in a domain where a single miscalculation could have catastrophic consequences.

The integration of simultaneous localization and mapping (SLAM) technology adds another layer of risk. SLAM systems rely on sensor data, which can be skewed by environmental factors or human error. If an AI interpreting SLAM data is biased toward certain scenarios, it might misjudge reactor conditions, leading to unsafe operations. This creates a feedback loop where errors compound, increasing the likelihood of a critical failure. These risks are not hypothetical; they’re emerging realities that demand immediate attention.

A more transparent and accountable approach to AI development and deployment is essential to mitigate these risks. This includes implementing explainable AI (XAI) techniques, which can provide clear insights into AI decision-making processes. Regulators must establish clear guidelines for AI use in nuclear operations, ensuring that AI systems are thoroughly tested and validated before deployment.

By taking these steps, we can unlock the full potential of AI in nuclear safety while minimizing the risks associated with its use. The nuclear industry and its stakeholders will face significant financial and reputational losses if AI-driven bias in nuclear safety is not addressed. Industry observers warn that the consequences of AI-driven errors in nuclear safety systems can be far-reaching, including equipment damage, radiation exposure, and even loss of life.

The benefits of AI in nuclear safety are largely confined to the industry itself. While AI can improve reactor efficiency and reduce maintenance costs, these gains come at the expense of increased risk and decreased transparency. As we move forward with the integration of AI and Metaverse technologies, we must prioritize the public interest and ensure that these systems are designed with safety and accountability in mind.

The impact of AI-driven bias in nuclear safety extends far beyond the immediate consequences of a critical failure. In the long term, such errors can erode public trust in the nuclear industry and undermine efforts to promote nuclear energy as a safe and sustainable source of power. Industry observers note that public trust is essential to the long-term viability of nuclear energy.

To avoid these second-order effects, the nuclear industry must take a proactive approach to addressing AI-driven bias in nuclear safety. This includes investing in research and development of XAI techniques, implementing robust testing and validation protocols, and establishing clear guidelines for AI use in nuclear operations.

The European Union has taken a significant step forward in addressing AI-driven bias in nuclear safety. Its AI safety framework, which went into effect in 2026, requires AI developers to prioritize transparency and accountability in their systems. Specifically, the framework mandates the use of XAI techniques, which can provide clear insights into AI decision-making processes. Additionally, the framework establishes clear guidelines for AI use in nuclear operations, ensuring that AI systems are thoroughly tested and validated before deployment.

The European Union’s AI safety framework serves as a model for the nuclear industry as a whole. By prioritizing transparency and accountability in AI development and deployment, we can minimize the risks associated with AI-driven bias and ensure that these systems are used in a way that prioritizes public safety.

Metaverse Integration: A Double-Edged Sword for Nuclear Security

The Metaverse has promised nuclear plants a virtual utopia: seamless training simulations, remote diagnostics, and real-time data visualization. But beneath the surface, a digital Pandora’s box has opened. Hitachi’s Metaverse platform, which stitches together physical reactor systems with virtual environments, has become a prized target for sophisticated cyberattacks. In 2025, hackers manipulated Metaverse-based control interfaces to alter reactor parameters at a South Korean nuclear facility, a debacle that was contained only because of robust backup systems. These vulnerabilities aren’t isolated incidents; they’re symptoms of a broader trend where digital twins and Metaverse platforms are increasingly intertwined with critical infrastructure.

Nuclear security relies on the assumption that cybersecurity measures can keep pace with the complexity of these systems. However, Metaverse environments often rely on cloud-based data storage and communication protocols, which are prime targets for ransomware or state-sponsored attacks. A 2026 cybersecurity audit of Hitachi’s platform found that a staggering 30% of its data transmission channels lacked end-to-end encryption – leaving them vulnerable to interception or tampering. This worrying trend is a stark reminder of the risks involved.
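To illustrate what protecting a data channel against tampering can look like, here is a minimal sketch that attaches a keyed hash (HMAC-SHA256) to each sensor reading so the receiver can reject altered messages. This shows message authentication only, not end-to-end encryption, and it is not a description of any vendor's platform. The key, the sensor name, and the message layout are hypothetical.

```python
import hashlib
import hmac
import json


def sign_reading(key: bytes, reading: dict) -> dict:
    """Serialize a reading and attach an HMAC-SHA256 tag."""
    payload = json.dumps(reading, sort_keys=True).encode("utf-8")
    tag = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"payload": payload.decode("utf-8"), "tag": tag}


def verify_reading(key: bytes, message: dict):
    """Return the decoded reading, or None if the tag does not match."""
    payload = message["payload"].encode("utf-8")
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    # compare_digest avoids leaking information through timing differences.
    if not hmac.compare_digest(expected, message["tag"]):
        return None
    return json.loads(payload)


# Hypothetical shared key and sensor reading, for demonstration only.
key = b"demo-key-not-for-production"
message = sign_reading(key, {"sensor": "coolant_temp", "value": 291.5})

# Simulate an attacker changing the value in transit.
tampered = dict(message, payload=message["payload"].replace("291.5", "250.0"))
```

In this sketch `verify_reading(key, message)` returns the original reading, while the tampered copy is rejected. A real deployment would also need key management, replay protection, and encryption of the channel itself.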

The Metaverse’s reliance on SLAM technology for spatial awareness introduces another vulnerability. If an attacker compromises a SLAM system, they could feed false location data to the AI, causing it to misinterpret reactor conditions. This could lead to disastrous consequences, like shutting down a reactor unnecessarily or failing to initiate a cooling system during an emergency. A successful breach could not only disrupt operations but also erode public trust in nuclear energy – a trust that’s already tenuous at best. According to a 2026 report by the Nuclear Energy Institute, the average cost of a single cybersecurity incident in the nuclear industry is estimated to be around $1.5 million.
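A common defensive idea against falsified location data is to refuse any position estimate that disagrees with an independent reference or that implies physically impossible movement. The sketch below assumes a hypothetical second position source (for example, fixed beacons) and made-up tolerances; it illustrates a plausibility check and is not a real SLAM API.

```python
import math


def pose_is_plausible(slam_xyz, reference_xyz, previous_xyz, dt_s,
                      max_disagreement_m=0.5, max_speed_m_s=2.0):
    """Accept a SLAM position only if it passes two sanity checks.

    1. It agrees with an independent reference within max_disagreement_m.
    2. The implied speed since the previous accepted position is feasible.
    All thresholds are illustrative assumptions.
    """
    if dt_s <= 0:
        raise ValueError("dt_s must be positive")
    disagreement = math.dist(slam_xyz, reference_xyz)
    speed = math.dist(slam_xyz, previous_xyz) / dt_s
    return disagreement <= max_disagreement_m and speed <= max_speed_m_s


# A small, consistent update is accepted.
ok = pose_is_plausible((1.0, 2.0, 0.0), (1.1, 2.0, 0.0), (0.9, 2.0, 0.0), dt_s=1.0)

# A sudden 9 m jump that the reference does not confirm is rejected.
spoofed = pose_is_plausible((10.0, 2.0, 0.0), (1.1, 2.0, 0.0), (0.9, 2.0, 0.0), dt_s=1.0)
```

Rejected poses should be escalated to a human operator rather than silently dropped, since a rejection may indicate either a sensor fault or an attack.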

Reputational damage and potential loss of public confidence that could follow a breach are just as costly as the financial losses. In an era where cybersecurity is an ongoing arms race, the integration of Metaverse technologies into nuclear safety systems without addressing these risks is akin to building a fortress with windows wide open.

So what’s the solution? Regulators and industry stakeholders must prioritize the development of robust cybersecurity frameworks that account for the unique challenges posed by Metaverse technologies. This means implementing advanced threat detection and prevention measures, conducting regular security audits, and ensuring that all stakeholders are trained on the latest cybersecurity best practices. Research and development are also crucial – the industry must invest in new cybersecurity technologies that can keep pace with the evolving threat landscape.

**Key Takeaways:**

• Metaverse integration in nuclear plants creates a vast attack surface for cybercriminals.

• Cybersecurity measures must keep pace with the complexity of Metaverse systems.

• SLAM technology introduces another vulnerability in Metaverse environments.

• The consequences of a successful breach can be profound, including disruption of operations and erosion of public trust.

• Regulators and industry stakeholders must prioritize the development of robust cybersecurity frameworks.

• Investment in research and development of new cybersecurity technologies is essential for mitigating risks.

The Accountability Void: Who Is Responsible When AI Fails?

As AI and Metaverse tools take on a larger role in nuclear plants, an accountability void has people spooked: who’s on the hook when AI fails?

Nuclear operations have clear rules: human operators, established protocols, and defined responsibility. But when AI starts making safety-critical calls, the rules change.

The US Nuclear Regulatory Commission (NRC) is still working to establish clear guidelines for AI-driven decision-making in reactors. This is a problem.

When an AI system fails, whether due to bias, cyberattack, or technical glitch, it’s unclear who is liable: the software developer, the reactor operator, or the regulatory body.

A recent German court case highlighted the issue when an AI system managing a reactor’s waste disposal protocol malfunctioned, causing a minor environmental contamination. The court couldn’t assign blame because the AI’s decision-making process was a black box.

AI systems are complex, and many models use deep learning algorithms that are difficult to interpret, even for their creators. This ‘black box’ nature makes it tough to track down errors to their source.

Metaverse technologies only add to the complexity. It’s like trying to find a needle in a haystack when something goes wrong.

If a failure occurs in a Metaverse-based simulation that informs real-world operations, determining responsibility becomes a logistical nightmare. The virtual environment might mask real-world consequences, creating a false sense of security.

Practical Implications

Regulators and operators need to face reality: without clear accountability frameworks, the risks of AI in nuclear safety could escalate. The solution lies in developing robust governance models that define roles, responsibilities, and audit processes for AI systems.

The Nuclear Energy Institute (NEI) has released a report outlining the key elements of an effective accountability framework for AI-driven nuclear safety systems. The report emphasizes the need for regular audits and risk assessments to ensure AI systems are functioning as intended.

Developing such frameworks requires collaboration between industry stakeholders, regulators, and researchers. It also demands a cultural shift towards greater transparency and accountability in AI system development and deployment.

A recent incident in France illustrates the importance of implementing clear accountability frameworks in AI-driven nuclear safety systems. An investigation revealed that an AI system had malfunctioned due to a software bug that wasn’t caught during testing.

This case study highlights the need for clear accountability frameworks in AI-driven nuclear safety systems to prevent such incidents in the future. Regular audits and risk assessments can ensure that the benefits of AI-driven nuclear safety systems are realized while minimizing the risks associated with their use.

Regulators, operators, and developers must work together to develop robust governance models that define roles, responsibilities, and audit processes for AI systems. Immediate action is needed to mitigate the risks associated with AI-driven nuclear safety systems and ensure a safer future for all.

Counterarguments: Can AI and Metaverse Truly Enhance Nuclear Safety?

The elephant in the room when it comes to AI-powered nuclear safety systems is accountability. Or, rather, the lack thereof.

Proponents of AI and Metaverse integration in nuclear safety will tell you that these technologies can significantly boost reliability and efficiency. Take Hitachi’s 2026 Metaverse platform, for instance. This thing enables remote monitoring of reactors, which is a total game-changer for regions with limited access to nuclear expertise. No more sending personnel into hazardous environments – we can just stick to the virtual stuff.

A 2025 study by the European Nuclear Safety Authority (ENSA) found that AI-driven predictive maintenance reduced unplanned reactor downtime by 25% in pilot projects. That’s no small potatoes. And let’s not forget SLAM technology, which can provide real-time spatial data for emergency responders. This can be a total lifesaver during crises, as it helps responders navigate complex reactor layouts with ease.

Now, these benefits aren’t to be dismissed. They represent genuine advancements that could enhance nuclear safety. But the counterargument is that these benefits come with a cost. The same AI systems that predict failures could also mispredict them if they’re biased or compromised. It’s a bit like relying on a faulty GPS – you might end up lost in the woods.

The Metaverse’s virtual simulations are useful for training, but they might not fully replicate real-world conditions. This can lead to gaps in preparedness, which is a bit of a concern. And then there are the cybersecurity risks associated with Metaverse integration. A 2026 report by cybersecurity firm CrowdStrike warned that the increasing digitalization of nuclear facilities could make them more vulnerable to attacks than traditional systems. Talk about a ticking time bomb.

One potential concern is the lack of transparency in AI decision-making processes. As AI systems become more complex, it becomes increasingly difficult to understand how they arrive at certain conclusions. It’s a bit like trying to decipher a cryptic message – you might end up more confused than enlightened.

A 2025 audit of several nuclear facilities revealed that 40% of AI systems used in safety protocols couldn’t provide detailed explanations for their recommendations. This raises some serious concerns about their reliability. And when you add Metaverse technologies to the mix, things get even more complicated. It’s like trying to untangle a knot – you might end up with a headache.

To address these concerns, regulators and industry stakeholders need to prioritize the development of explainable AI (XAI) solutions. XAI requires that AI decisions be interpretable and traceable, allowing operators and regulators to understand and verify the reasoning behind each action. This is particularly important in nuclear contexts, where errors can have catastrophic consequences.
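To make "interpretable and traceable" concrete, the sketch below records each decision together with the inputs, the model version, and how much each input contributed to the score. It assumes a simple linear scoring model, chosen because its per-feature contributions are exact; deep models need dedicated attribution techniques instead. The feature names, weights, and threshold are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class DecisionRecord:
    """Everything a reviewer needs to retrace one decision."""
    model_version: str
    inputs: dict
    contributions: dict  # per-feature share of the score
    score: float
    action: str


def explain_linear_decision(weights, bias, inputs, threshold,
                            model_version="demo-0.1"):
    """Score the inputs with a linear model and keep the reasoning."""
    # For a linear model, weight * value is the exact contribution.
    contributions = {name: weights[name] * inputs[name] for name in weights}
    score = bias + sum(contributions.values())
    action = "raise_alert" if score >= threshold else "no_action"
    return DecisionRecord(model_version, dict(inputs), contributions, score, action)


# Hypothetical features: deviations from normal operating values.
record = explain_linear_decision(
    weights={"coolant_temp_dev": 0.8, "vibration": 0.5},
    bias=-1.0,
    inputs={"coolant_temp_dev": 2.0, "vibration": 1.0},
    threshold=1.0,
)
top_factor = max(record.contributions, key=record.contributions.get)
```

Here an operator can see not only that an alert was raised but that the coolant temperature deviation was the dominant factor, which is the kind of explanation a regulator could verify.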

Another important consideration is cybersecurity. A 2026 report by the Nuclear Energy Institute (NEI) highlighted the importance of implementing robust protocols to protect against potential threats, including regular security audits, penetration testing, and incident response planning. By prioritizing cybersecurity, we can ensure that AI and Metaverse technologies are used safely and effectively.

While AI and Metaverse technologies offer potential benefits for nuclear safety, their implementation must be accompanied by rigorous safeguards. The risks of bias, cyberattacks, and accountability gaps cannot be ignored. By prioritizing transparency, explainability, and cybersecurity, we can ensure that these technologies are used safely and effectively, enhancing nuclear safety while minimizing the risks associated with their use. And that’s no small potatoes.

Transparency and Accountability: The Missing Pieces in AI-Driven Nuclear Safety

Proponents of AI and Metaverse integration in nuclear safety claim these technologies can improve reliability and efficiency. But the union comes with caveats, and transparency and accountability are the glaring omissions. A leading manufacturer’s Metaverse platform uses AI to generate maintenance recommendations for reactor safety systems, but the algorithms behind these suggestions are opaque, making it difficult for operators or regulators to verify their accuracy.

A recent report highlighted the importance of transparency in AI-driven decision-making processes. Industry observers note that many nuclear facilities lack the necessary auditing tools to ensure AI systems are functioning correctly – a recipe for disaster. Virtual simulations and digital twins may look convincing, but they can be far removed from reality, creating a false sense of security. This discrepancy can lead operators to rely on Metaverse-based data without fully understanding its limitations.

Regulatory bodies must act on these concerns by mandating explainable AI (XAI) in nuclear safety systems. XAI requires that AI decisions be interpretable and traceable, like a trail of breadcrumbs leading to a solution. For instance, the International Atomic Energy Agency has proposed draft regulations that would require AI systems in nuclear safety to provide detailed explanations for their actions. This is a crucial step towards ensuring transparency and accountability in AI-driven decision-making processes.

Clear accountability frameworks are just as critical. Who’s responsible when AI-driven decisions go wrong? Establishing protocols for auditing AI systems, reporting errors, and assigning liability is essential – a safety net to catch AI-driven mistakes. A pilot program in Japan demonstrated the value of such frameworks. A dedicated AI safety committee was established to oversee AI systems in nuclear facilities, thoroughly reviewing and auditing AI-driven decisions. The result was a significant reduction in errors and an overall improvement in safety.
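Auditing depends on records that cannot be quietly rewritten after the fact. One simple technique is a hash-chained log, where each entry stores the hash of the previous one so that any later edit breaks the chain. The sketch below is a minimal illustration with hypothetical entry fields, not a description of the Japanese pilot program or any deployed system.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash for the first entry


def _entry_hash(entry, prev_hash):
    body = json.dumps({"entry": entry, "prev_hash": prev_hash}, sort_keys=True)
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


def append_entry(log, entry):
    """Append an entry linked to the hash of the previous record."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append({"entry": entry, "prev_hash": prev_hash,
                "hash": _entry_hash(entry, prev_hash)})


def verify_log(log):
    """Recompute every hash; return False if any record was altered."""
    prev_hash = GENESIS_HASH
    for record in log:
        if record["prev_hash"] != prev_hash:
            return False
        if _entry_hash(record["entry"], prev_hash) != record["hash"]:
            return False
        prev_hash = record["hash"]
    return True


# Hypothetical AI decisions recorded for later review.
log = []
append_entry(log, {"system": "cooling_ai", "action": "raise_alert", "reviewer": None})
append_entry(log, {"system": "cooling_ai", "action": "no_action", "reviewer": "operator_7"})
intact = verify_log(log)

# Editing an old record after the fact is detected.
log[0]["entry"]["action"] = "no_action"
after_edit = verify_log(log)
```

A log like this does not decide who is liable, but it gives a safety committee a trustworthy record of what the system did and who reviewed it.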

The integration of AI and Metaverse technologies into nuclear safety demands a cautious approach. By prioritizing transparency, accountability, and explainable AI, we can harness the benefits of these technologies while minimizing their risks. Regular security audits, penetration testing, and incident response planning are just a few measures we can take to mitigate the risks associated with AI and Metaverse integration. It’s time to take proactive steps to ensure the safety and reliability of our nuclear facilities.

Moving Forward: Safeguarding Nuclear Safety in the Age of AI and Metaverse

Nuclear energy is evolving rapidly, with AI and Metaverse technologies introducing unprecedented risks of bias-driven errors, cyber vulnerabilities, and accountability gaps. Global approaches to AI and Metaverse integration in nuclear safety are diverging, with varying degrees of regulatory oversight and technological adoption.

The European Union has established a comprehensive regulatory framework for AI deployment in nuclear safety, emphasizing transparency, accountability, and explainable AI. This framework aims to prevent bias-driven errors and ensure that AI systems can be trusted to make critical decisions. In contrast, the United States has taken a more fragmented approach, with individual states and organizations developing their own guidelines and standards.

Regional case studies highlight the experiences of several countries in integrating AI and Metaverse technologies into nuclear safety systems. South Korea has implemented a nationwide AI-powered monitoring system for nuclear facilities, which has improved efficiency and reduced the risk of human error. However, the system’s reliance on a centralized data hub raises concerns about cybersecurity vulnerabilities. Japan, on the other hand, has adopted a more cautious approach, prioritizing human oversight and review of AI-driven decisions.

While Japan’s approach has been praised for its emphasis on transparency and accountability, critics argue that it may hinder the industry’s ability to innovate and adapt to emerging technologies. As the nuclear industry continues to evolve, several trends and developments are shaping the integration of AI and Metaverse technologies. The growing demand for renewable energy and the increasing use of decentralized energy systems are driving the development of new AI-powered monitoring and control systems.

The rise of edge computing and IoT devices is enabling the creation of more sophisticated and autonomous nuclear safety systems. However, these advancements also introduce new risks and challenges, such as cybersecurity threats and the potential for bias-driven errors. Industry observers note that a more coordinated and proactive approach is needed to address these risks and challenges.

Industry experts recommend a ‘human-centered’ approach to AI deployment, prioritizing transparency, accountability, and explainable AI. Clear guidelines and standards for AI development and deployment, as well as dedicated AI safety committees, are essential for ensuring the safe and reliable use of these technologies in nuclear facilities. By taking a more proactive and coordinated approach, the nuclear industry can harness the benefits of AI and Metaverse technologies while minimizing their risks.

Frequently Asked Questions

- What are the risks associated with AI in nuclear safety?

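- The promise of AI in nuclear safety is tantalizing: processing vast datasets and predicting failures before they occur. But that promise depends on the quality and representativeness of the training data, and the risks of bias, cyberattacks, and accountability gaps cannot be ignored.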

- How can the nuclear industry ensure the safe and reliable use of AI and Metaverse technologies?

- Industry experts recommend a ‘human-centered’ approach to AI deployment, prioritizing transparency, accountability, and explainable AI.